In a plot twist that feels almost too perfect for April 1st, Anthropic, one of the leading companies in the AI industry, accidentally leaked more than 500,000 lines of its own source code.

Not through a cyberattack. Not through some elite hacker group. But through something far more relatable: a packaging mistake.

Somewhere in a release pipeline, a .map file slipped into production. That file pointed to an internal archive. That archive contained nearly 2,000 files and over half a million lines of code. And within hours, the internet did what it always does best: it made sure the code would never disappear again.
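For anyone who has never opened one: a source map is just a JSON file (the Source Map v3 format) that lists the original files behind a bundle in `sources`, and it can optionally embed their full text in `sourcesContent`. The sketch below shows, in general terms, how much a single `.map` file can reveal. It is not taken from the leaked code; `summarizeSourceMap` and the sample map are hypothetical names and data made up for illustration.

```typescript
// Minimal shape of a Source Map v3 document (only the fields used here).
interface SourceMapV3 {
  version: number;
  sources: string[];
  sourcesContent?: (string | null)[];
  sourceRoot?: string;
  mappings: string;
}

interface SourceMapSummary {
  referencedFiles: string[]; // every original file path the map names
  embeddedFiles: string[];   // files whose full text is inlined in the map
  embeddedLineCount: number; // total lines of inlined source
}

// Hypothetical helper: reports what a source map exposes about the original code.
function summarizeSourceMap(json: string): SourceMapSummary {
  const map = JSON.parse(json) as SourceMapV3;
  const root = map.sourceRoot ?? "";
  const referencedFiles = map.sources.map((s) => root + s);
  const embeddedFiles: string[] = [];
  let embeddedLineCount = 0;

  (map.sourcesContent ?? []).forEach((content, i) => {
    if (typeof content === "string") {
      embeddedFiles.push(referencedFiles[i] ?? "(unknown)");
      embeddedLineCount += content.split("\n").length;
    }
  });

  return { referencedFiles, embeddedFiles, embeddedLineCount };
}

// Made-up sample: two original files, one of them fully embedded.
const exampleMap = JSON.stringify({
  version: 3,
  sources: ["src/agent.ts", "src/tools.ts"],
  sourcesContent: ["export const a = 1;\nexport const b = 2;", null],
  mappings: "",
});

const summary = summarizeSourceMap(exampleMap);
console.log(summary.referencedFiles, summary.embeddedLineCount);
```

Even when `sourcesContent` is empty, the `sources` paths alone tell a curious reader where to look next.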

Anthropic later clarified that no customer data, API keys, or model weights were exposed. That is technically reassuring, but it also misses the main point.

Because what leaked was not just code. It was the blueprint. Inside the exposed files, developers found the actual structure of a modern AI coding system. Not just prompts, not just responses, but the entire orchestration layer: tool chains, memory systems, multi-agent coordination, and internal workflows that turn a language model into something that feels closer to an operating system than a chatbot.

In other words, Anthropic didn’t leak “Claude.”

They leaked how Claude actually works.

Unfortunately, I cannot show you any of that code here. My lawyer specifically told me, “you will be in jail,” and to be honest, I am not ready to fight a multi-billion-dollar AI company on your behalf. Also, please pay your debts before I sue you personally. This is unrelated, but still important.

What we can say, however, is that the leaked codebase is written mostly in TypeScript. This is bad news for Python fans, and also for me personally as a Kotlin developer. It is a difficult time for all of us.

As the story kept spreading, the internet quickly found its next favorite subplot:

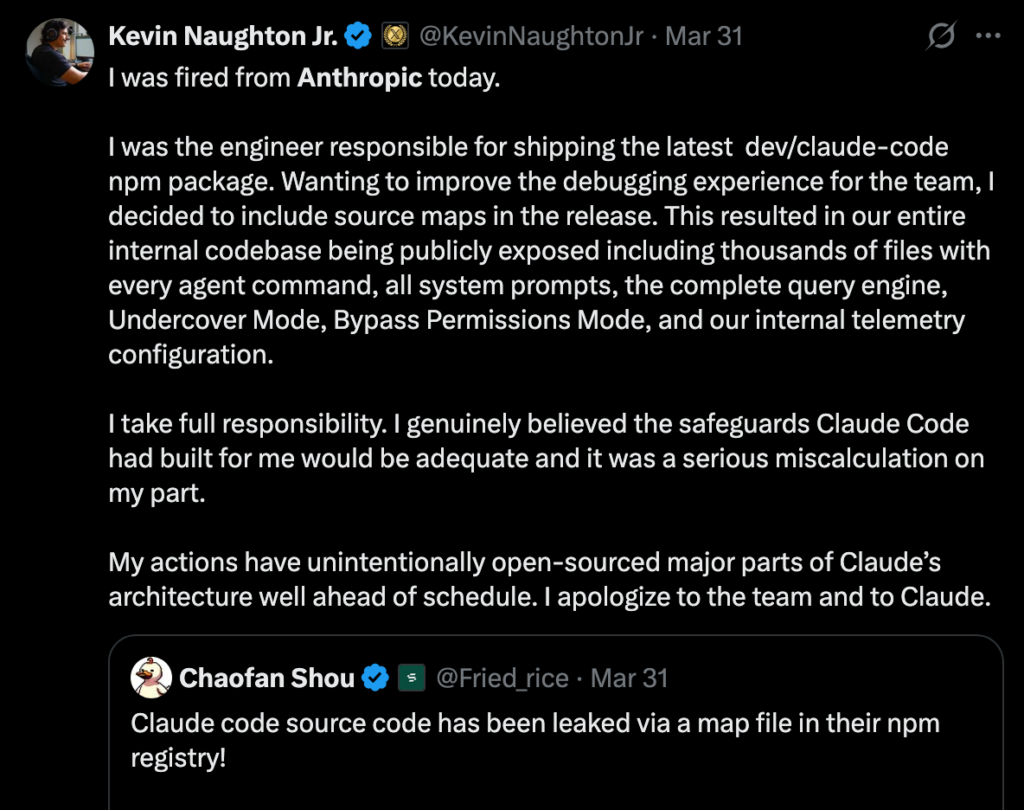

It was a viral post on X (formerly Twitter) by Kevin Naughton Jr., in which he claimed to be the engineer behind the incident and to have just been fired for accidentally exposing the entire Claude Code system through a source map file. The post, which described leaking everything from internal prompts to “bypass permission modes,” was dramatic enough to make half of tech Twitter panic and the other half laugh, before people started realizing it might not be entirely serious, or at least not entirely verified.

In typical internet fashion, jokes followed immediately, with many users saying they “hope Kevin is doing fine,” while others pointed out that if this were real, getting fired might actually be the least of his concerns. Either way, whether it was an April Fools’ joke, satire, or just perfectly timed chaos, Kevin somehow became one of the most talked-about “engineers” of the leak — and possibly the only person in this story who managed to go viral without writing a single line of TypeScript.

Beyond the jokes, the technical implications are serious.

The leak revealed that modern AI systems are no longer just models. They are layered systems built around models. The real intelligence is distributed across pipelines, workflows, and persistent context management. The model is just one part of the machine.

And now that machine has been exposed.

Developers quickly discovered features that had not even been officially released yet. There were hints of always-on agents capable of running in the background, fixing code, and continuing tasks without direct user input. There were also experimental concepts, including a Tamagotchi-like assistant living inside the terminal, which is either innovative or slightly concerning depending on how much sleep you got last night.

More interestingly, the code revealed how Claude monitors user interactions. It can detect frustration signals, track behavioral patterns, and log interaction data to improve the system over time. Not through advanced AI, but sometimes through simple techniques like pattern matching.
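To make "simple pattern matching" concrete, here is a minimal sketch of what regex-based frustration detection could look like. Every pattern and name below (`FRUSTRATION_PATTERNS`, `countFrustrationSignals`) is my own invention for illustration. None of it comes from the leaked code, and the real implementation may look nothing like this.

```typescript
// Hypothetical patterns, invented for this example. NOT from the leaked code.
const FRUSTRATION_PATTERNS: RegExp[] = [
  /\b(wtf|ugh+|argh+)\b/i,                    // exasperated interjections
  /\bstill (not working|broken|failing)\b/i,  // repeated failure
  /\bwhy (is|does|won't|doesn't) (this|it)\b/i, // rhetorical "why" questions
  /!{3,}/,                                    // three or more exclamation marks
];

// Counts how many distinct patterns match a single user message.
function countFrustrationSignals(message: string): number {
  return FRUSTRATION_PATTERNS.filter((pattern) => pattern.test(message)).length;
}

console.log(countFrustrationSignals("Thanks, that fixed it."));
console.log(countFrustrationSignals("ugh, still not working"));
```

No model call is needed for any of this, which is exactly why a technique this cheap can run on every message.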

Which means that while users were trying to debug their code, the system might have been quietly noticing how frustrated they were about it.

This raises a larger question that goes beyond Anthropic.

If this is how one of the most safety-focused AI companies operates, what does the rest of the industry look like?

From a security perspective, the incident is even more revealing. The leak was not caused by a failure in the model. It was not even a vulnerability in the application logic. It was a failure in the release pipeline.

A single misconfigured packaging step exposed months, possibly years, of engineering work.

This highlights a growing reality in AI development. The biggest risks are no longer in the intelligence layer. They are in the infrastructure around it. Build systems, deployment pipelines, and configuration management are now just as critical as model safety. And the consequences are immediate.
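The unglamorous defense here is a release gate: a check that refuses to publish if the package contains anything it should not. Below is a minimal sketch of that idea. The deny list and the names `FORBIDDEN_IN_RELEASE`, `findForbiddenFiles`, and `assertReleaseIsClean` are assumptions of mine, not a description of Anthropic's pipeline or of any particular tool.

```typescript
// Hypothetical deny list; a real project would tune this to its own layout.
const FORBIDDEN_IN_RELEASE: RegExp[] = [
  /\.map$/,                     // source maps
  /(^|\/)\.env(\..*)?$/,        // environment files such as .env or .env.local
  /\.(zip|tgz|tar(\.gz)?)$/,    // stray archives
];

// Returns every path in the package that matches the deny list.
function findForbiddenFiles(files: string[]): string[] {
  return files.filter((file) =>
    FORBIDDEN_IN_RELEASE.some((pattern) => pattern.test(file)),
  );
}

// Throws if the release contains anything on the deny list.
function assertReleaseIsClean(files: string[]): void {
  const offenders = findForbiddenFiles(files);
  if (offenders.length > 0) {
    throw new Error(
      `Refusing to publish; unexpected files: ${offenders.join(", ")}`,
    );
  }
}

// Made-up file list standing in for the contents of a package.
const packageFiles = ["package.json", "dist/cli.js", "dist/cli.js.map"];
console.log(findForbiddenFiles(packageFiles));
```

In an npm project, the file list could come from something like `npm pack --dry-run`, which reports what would be shipped without actually publishing it. A deny list is the weaker form of this check. An allow list of exactly the files you intend to ship fails safer, because anything new is rejected by default.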

Once the code hit GitHub, it spread rapidly. Copies were forked, mirrored, and even rewritten in other programming languages to avoid takedowns. At that point, containment was no longer a technical problem. It was an internet problem.

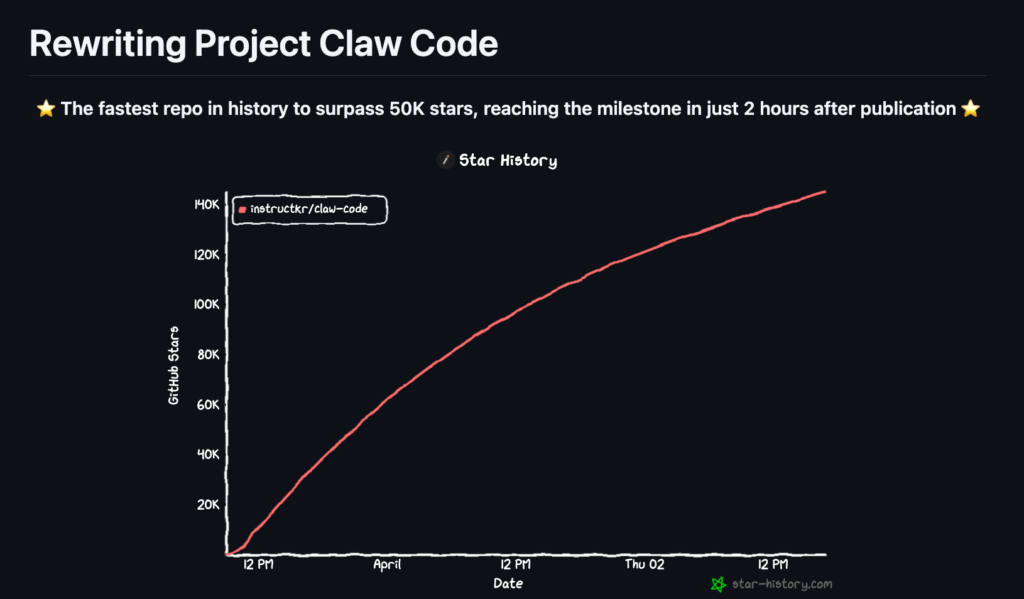

Within hours, a new repository called Claw Code appeared. It did not just mirror the leaked system; it attempted to rebuild and improve it. And it didn’t just go viral, it broke records. The repo became the fastest in GitHub history to surpass 100,000 stars, with developers rushing in not to archive the leak but to rewrite the entire AI harness properly, this time in Rust, because apparently TypeScript trauma was not enough for one week.

Competitors now have access to insights that would normally take years to develop. Not the model itself, but the architecture that makes the model useful. The workflows, the orchestration, the hidden layer that turns AI into a product.

There is also, of course, the more cynical interpretation.

Within days, thousands of developers who had never used Claude Code were suddenly analyzing it, discussing it, and building their own versions. That level of attention is something most companies spend millions trying to achieve.

So naturally, the question had to be asked.

Was this just an accident, or the most effective developer marketing campaign of 2026?

There is no evidence to suggest it was intentional. But if it were, it would be one of the most effective “accidents” in tech history.

Either way, one thing is now clear.

The future of AI will not be decided by who has the best model.

It will be decided by who builds the best system around it.

And for a brief moment, that system was open to everyone.

In one final line, as the internet likes to say: “thanks for the leak, we’ll take it from here.”

Sources

https://www.theguardian.com/technology/2026/apr/01/anthropic-claudes-code-leaks-ai

https://www.businessinsider.com/anthropic-claude-code-data-leak-developers-2026-4

https://www.theverge.com/ai-artificial-intelligence/904776/anthropic-claude-source-code-leak

https://www.axios.com/2026/03/31/anthropic-leaked-source-code-ai